Tutorial Use Case: COCO Detection and Segmentation

Dataset

The COCO dataset is a pioneering benchmark dataset in computer vision for object detection and segmentation. Each input is an image sourced from the public Web. COCO supports a variety of targets, but for this tutorial’s use case the target is a composite structure comprising each object instance’s category label (out of 80 categories), bounding box, and segmentation mask (set of pixels).

The raw dataset files are sourced from the COCO dataset website.

There are 2 top-level directories:

data: This has all the raw image files stored under the “train2017” and “val2017” subdirectories, e.g., “000000000009.jpg”.

metadata: This has the Example Structure Files (ESFs) for both train and validation partitions and the original COCO annotations file for the latter alone. Please read API: Data Ingestion and Locators for more ESF-related details.

The specific metadata files are as follows:

coco-train.csv: A labeled set with the following column names: (id, height, width, file_name, annotations).

coco-val.csv: Also a labeled set with the same column names: (id, height, width, file_name, annotations).

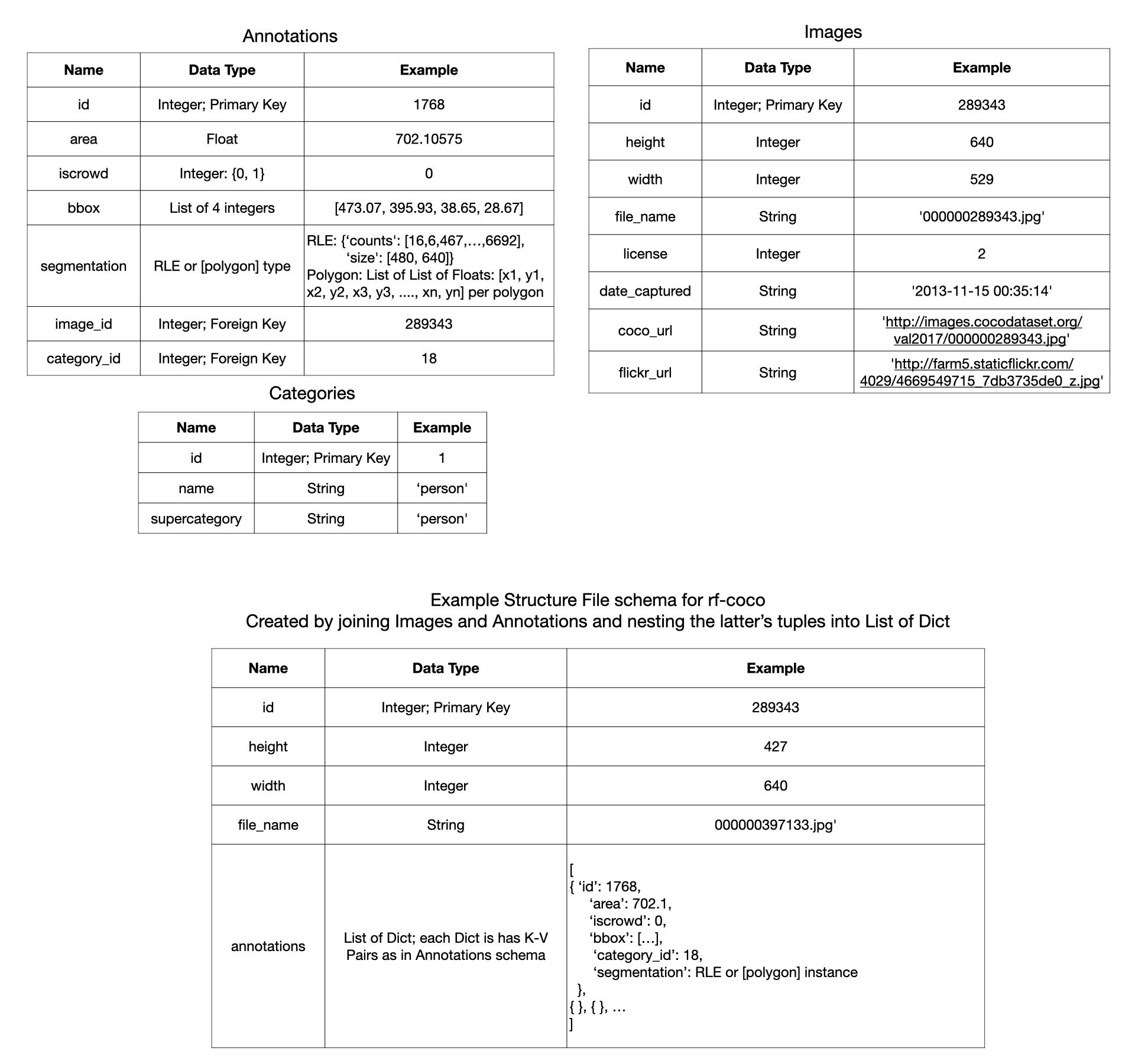

instances_val2017.json: The raw annotations file in its original format. It has 3 tables stored as nested dictionaries: Annotations, Images, and Categories. This file is used as is to enable the use of the

pycocotoolslibrary to compute evaluation metrics.

The following image depicts the schemas of all the tables involved.

The ESF is derived by a simple join-project query over the Images and Annotations tables. This use case’s training process requires all annotations of a given image to be used together to calculate the loss. Thus, each image’s annotations are stored as a list of dictionaries within the annotations column.
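As a rough illustration of the join-project step, the sketch below groups annotations by image and emits one row per image with the ESF column names listed earlier. It is not the script used to produce the tutorial's ESFs. It assumes the standard COCO JSON layout (top-level "images" and "annotations" lists, linked by "image_id"), treats the join as an inner join (images without annotations are dropped, which is an assumption), and uses made-up toy values. The function name build_esf_rows is hypothetical.

```python
from collections import defaultdict


def build_esf_rows(coco):
    """Join Images with Annotations on the image id and project the ESF columns."""
    # Group annotations by the image they belong to.
    anns_by_image = defaultdict(list)
    for ann in coco["annotations"]:
        anns_by_image[ann["image_id"]].append(ann)

    rows = []
    for img in coco["images"]:
        # Assumption: inner join, so images with no annotations are skipped.
        if img["id"] not in anns_by_image:
            continue
        rows.append({
            "id": img["id"],
            "height": img["height"],
            "width": img["width"],
            "file_name": img["file_name"],
            # All annotations of one image stay together as a list of dicts.
            "annotations": anns_by_image[img["id"]],
        })
    return rows


# Tiny made-up input in the COCO layout (values are illustrative only).
toy = {
    "images": [
        {"id": 9, "height": 480, "width": 640, "file_name": "000000000009.jpg"},
        {"id": 25, "height": 426, "width": 640, "file_name": "000000000025.jpg"},
        {"id": 30, "height": 428, "width": 640, "file_name": "000000000030.jpg"},
    ],
    "annotations": [
        {"id": 1, "image_id": 9, "category_id": 51, "bbox": [1.0, 2.0, 30.0, 40.0]},
        {"id": 2, "image_id": 9, "category_id": 56, "bbox": [5.0, 6.0, 70.0, 80.0]},
        {"id": 3, "image_id": 25, "category_id": 25, "bbox": [9.0, 9.0, 10.0, 10.0]},
    ],
}

esf_rows = build_esf_rows(toy)
for row in esf_rows:
    print(row["file_name"], len(row["annotations"]))
```

Keeping the annotations nested per image means a single row carries everything needed to compute that image's loss, at the cost of a non-flat column that must be serialized when written to CSV.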

Model

This tutorial notebook illustrates hyperparameter tuning in a single run_fit() with a popular model architecture for detection+segmentation tasks: Mask R-CNN with a ResNet-50 backbone from the torchvision library. This model was already trained on this dataset. But since we are continuing training on a smaller sample with potentially different hyperparameters, you are likely to see diverse learning behaviors. We also expose a user knob in the create_model() function to optionally reinitialize its weights and show from-scratch training behavior. Such diversity is useful to help understand the utility of our Interactive Control operations.

Config Knobs

We perform simple hyperparameter tuning with GridSearch(). We compare 2 different values each for batch_size and lr (learning rate). Feel free to modify the value of the “pretrained” user knob to compare more runs.
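To make the size of the search concrete, here is a minimal plain-Python sketch of how a grid over two knobs expands into individual configurations. It does not use or reproduce RapidFire AI's GridSearch(). The helper expand_grid and the specific batch_size and lr values are hypothetical, chosen only for illustration.

```python
from itertools import product


def expand_grid(search_space):
    """Return one dict per combination of the listed knob values."""
    keys = list(search_space)
    combos = product(*(search_space[k] for k in keys))
    return [dict(zip(keys, values)) for values in combos]


# Hypothetical values; the notebook defines the actual ones.
search_space = {"batch_size": [4, 8], "lr": [1e-4, 1e-3]}
configs = expand_grid(search_space)

# Adding a second value for another knob doubles the number of runs.
with_pretrained = expand_grid({**search_space, "pretrained": [True, False]})

for cfg in configs:
    print(cfg)
print(len(configs), len(with_pretrained))
```

With 2 values for each of the 2 knobs, the grid yields 4 configurations. Listing both values of the “pretrained” knob as well would yield 8.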

Step by Step Code in our API

Please check out the notebook named rf-tutorial-coco-detseg.ipynb in the Jupyter home directory on your RapidFire AI cluster.